Posted: 8/18/2025

Choudhury leading explainable AI project in agriculture

By Ronica Stromberg

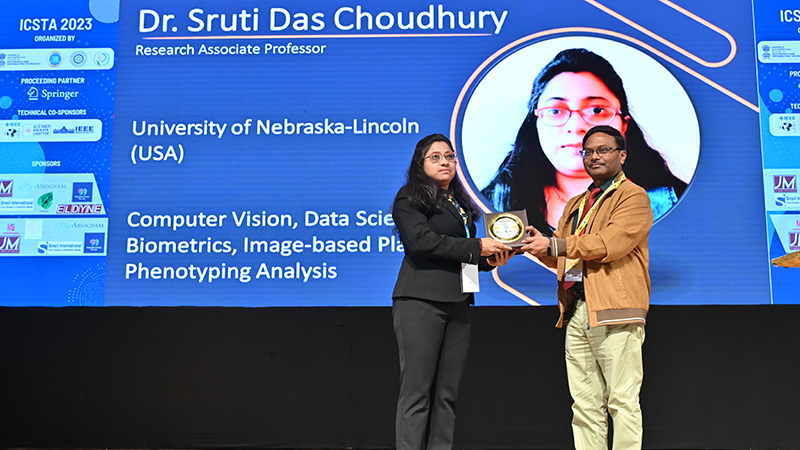

University of Nebraska–Lincoln professor Sruti Das Choudhury is developing artificial intelligence that can give farmers the reasoning behind its answers.

Scientists like Das Choudhury use farm data and artificial intelligence to recommend important farming decisions, but how can farmers trust those decisions? Farmers can see the information entered into AI and can see the answer or recommendation AI spits out, but they cannot see how AI came to that decision or whether it is just hallucinating.

Das Choudhury is leading two School of Natural Resources projects related to this, "Explainable AI for Precision Agriculture: A Data-Driven Approach to Crop Recommendation" and "Explainable Artificial Intelligence for Phenotype-Genotype Mapping Using Time-Series Data Analytics."

In the first project, her team aims to make AI explain its decisions and what factors influenced those decisions most. For example, if AI is asked to recommend a crop to plant in a field and is fed about 50 pieces of information about the field, like pH level, rainfall and temperature range, explainable AI will reveal which factors influenced its decision most and to what extent.
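To make "which factors influenced its decision most and to what extent" concrete, here is a minimal sketch using exact Shapley values, the idea behind SHAP-style attributions. It is not the team's model or pipeline: the scoring function `suitability`, its weights, the three features and the baseline field are all hypothetical, chosen only to keep the arithmetic easy to follow.

```python
from itertools import permutations

# Hypothetical toy "crop suitability" score; the weights are invented for illustration.
def suitability(ph, rainfall_mm, temp_c):
    return 2.0 * ph + 0.01 * rainfall_mm - 0.5 * abs(temp_c - 25.0)

def shapley_values(model, instance, baseline):
    """Exact Shapley values: average each feature's marginal effect over all
    orders in which features are switched from the baseline to the instance."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orders = list(permutations(names))
    for order in orders:
        current = dict(baseline)
        previous = model(**current)
        for name in order:
            current[name] = instance[name]
            updated = model(**current)
            totals[name] += updated - previous
            previous = updated
    return {name: totals[name] / len(orders) for name in names}

# Hypothetical field being explained, and a hypothetical "typical" reference field.
field = {"ph": 6.5, "rainfall_mm": 800.0, "temp_c": 30.0}
baseline = {"ph": 7.0, "rainfall_mm": 500.0, "temp_c": 25.0}

contrib = shapley_values(suitability, field, baseline)

# List the factors from most to least influential.
for name, value in sorted(contrib.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {value:+.2f}")
```

In this toy case, rainfall raises the score by about 3.0, temperature lowers it by about 2.5 and pH lowers it by about 1.0, and the three contributions add up to the difference between the field's score and the baseline's. Enumerating every ordering is only feasible for a handful of features; with around 50 inputs, practical tools rely on approximations.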

"We will have an answer, an explanation of the output of the model, and we can verify that explanation with the existing knowledge of the farmers," Das Choudhury said.

Explainable AI has been used in other fields, but if Das Choudhury's two projects are successful, she will be one of the first to use it for neural-network-based phenotype-genotype mapping using a realistic time-series multimodal image dataset. She said she expects explainable AI to build farmers’ trust in its answers.

"That's the idea, like deeper insight into the predictions that AI model is doing, and if we can do that, that will make the model more transparent, interpretable and trustworthy and will adhere to the ethical aspects of AI," she said.

Working with her on the project are Sanjan Baitalik and Rajashik Datta, two senior undergraduate students at the Institute of Engineering and Management in Kolkata, India. The team started the research in January 2025 and has already begun seeing results.

"We achieved results quite fast, honestly," Das Choudhury said. "Like, we submitted a paper in early August because we did it at a rapid pace."

All of the scientists have been working on the project as volunteers because they have been unable to secure funding. Das Choudhury said she hopes that establishing groundwork and preliminary results will strengthen their applications for funding. She has been seeking grants to compensate the students beyond the opportunity to learn from her.

Baitalik said that although he had explored and studied different AI interpretability techniques in coursework before the project, he had not had the chance to apply them to real-life problems. In the project, he has been applying explainable AI techniques such as LIME and SHAP and working with a large agricultural dataset.

"Applying these methods in a practical context helped deepen my comprehension of their utility and limitations," he said. "It also gave me the opportunity, thanks to Dr. Das Choudhury, to contribute to cutting-edge research."

The other student on the project, Datta, has focused on developing and evaluating machine learning models for crop classification and pattern recognition. She has used algorithms such as K-means, DBSCAN and Gaussian Mixture Models along with deep neural networks to better interpret patterns related to crops. She also used TensorBoard to visualize training and testing dynamics and clustering behavior.
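As a rough illustration of what a clustering algorithm such as K-means does, the sketch below groups a few made-up field readings by alternating between assigning each point to its nearest centroid and moving each centroid to the mean of its points. The readings, the choice of two clusters and the starting centroids are assumptions for this example only, not the project's data or code.

```python
import math

def kmeans(points, centroids, iterations=10):
    """Plain K-means (Lloyd's algorithm) with caller-supplied starting centroids."""
    def nearest(point):
        return min(range(len(centroids)), key=lambda i: math.dist(point, centroids[i]))

    for _ in range(iterations):
        # Assignment step: group points by their closest centroid.
        clusters = [[] for _ in centroids]
        for point in points:
            clusters[nearest(point)].append(point)
        # Update step: move each centroid to the mean of its members
        # (an empty cluster keeps its previous centroid).
        centroids = [
            tuple(sum(axis) / len(members) for axis in zip(*members)) if members else centroids[i]
            for i, members in enumerate(clusters)
        ]
    labels = [nearest(point) for point in points]
    return centroids, labels

# Hypothetical readings: (soil pH, annual rainfall in metres).
readings = [(5.5, 0.4), (5.7, 0.5), (5.6, 0.45), (7.4, 1.2), (7.6, 1.1), (7.5, 1.3)]

centroids, labels = kmeans(readings, centroids=[readings[0], readings[3]])
print(labels)
print(centroids)
```

Here the first three readings end up in one cluster and the last three in another. Real agricultural data would normally need feature scaling and a principled way of choosing the number of clusters, which this sketch leaves out.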

"Beyond technical growth, the project has improved my ability to communicate complex model behavior in ways that are interpretable to non-technical users, which is an essential skill in AI development for impactful applications," she said.

Das Choudhury said her goal is to build a team of AI scientists to work on applying explainable AI in different fields such as agriculture. She has started four explainable AI projects in agriculture, including the one using AI to recommend crops to plant.

She has developed a machine learning model to predict a crop’s genotype from its phenotypes. She said she would like to use explainable AI to ensure the model is predicting the right output. Phenotypes are visible traits, like leaf shape and plant height, but a plant’s genotype is its complete set of genetic material that can influence its phenotypes. Environment, nutrition and other factors can also influence phenotypes.

Alongside this AI research, Das Choudhury has proposed a semester-long course, "Artificial Intelligence, Computer Vision and Data Analytics for Agriculture and Natural Resources," to be offered through the School of Natural Resources and the Department of Biological Systems Engineering. The course would include a couple of units on explainable AI.

Das Choudhury said successful research on explainable AI in agriculture would be a novel contribution that would make AI’s ethical aspects more apparent to users.

"It would help farmers understand why an AI system makes certain predictions or decisions rather than having to just accept the decisions blindly," she said.